
We started with aggressive values for TCP_USER_TIMEOUT and keepalives, bumped it up until we stopped seeing Connection timed out errors, and added a small buffer on top.

So, does changing TCP_USER_TIMEOUT actually help? Obviously, this will depend on the network conditions so it will require tuning by trial and error while keeping in mind that TCP_USER_TIMEOUT interacts with other TCP parameters. In our use case, we want to drop unresponsive connections early while giving struggling connections enough of a window to retry. So, what value should we use for TCP_USER_TIMEOUT? Well, It depends. For sake of completeness, we will also cover this interaction, how each parameter can be set, and how these parameters work. There is an excellent article by Marek Majkowski that describes this interaction quite nicely. This is exactly what we want but there is a catch, setting TCP_USER_TIMEOUT overrides a set of other TCP settings such as keepalives and tcp_syn_retries so we have to pick the value carefully. Luckily, libpq-based clients allow the developer to set TCP_USER_TIMEOUT on individual connections, this timeout will put a cap on how many retransmissions are done before killing the connection. Under Linux, this is controlled by the system-wide setting tcp_retries2, but changing it will affect all outgoing traffic which is too disruptive. It seems that we want to override the number of attempts the OS does by default. It also shows the time until the next retransmission attempt (1min36sec) and the number of retransmissions so far (9) How should we fix this? “ss -o” command showing TCP retransmission timer (given the label on). Using tcpdumpand iptables we reproduced this 15-minute wait locally by opening a connection, blocking traffic to port 5432, and sending a query to the database. Searching for this error message in Postgresql code reveals that the suffix Connection timed out is coming directly from the OS which gives more credibility to the TCP retransmissions hypothesis. 
On these workers, the errors we saw were PQconsumeInput() could not receive data from server: Connection timed out We also saw this issue with background workers that don’t have application-level timeouts. The default settings yield a retransmission timeout that can reach up to 15 minutes which lines up very well with the timeouts we are seeing. Okay, so we have a smoking gun, but how does the increase in TCP retransmissions relate to the 15-minute requests?īy default, the Linux kernel will retransmit unacknowledged packets up to 15 times with exponential backoff, after that, it gives up and notifies the application that the connection is lost.

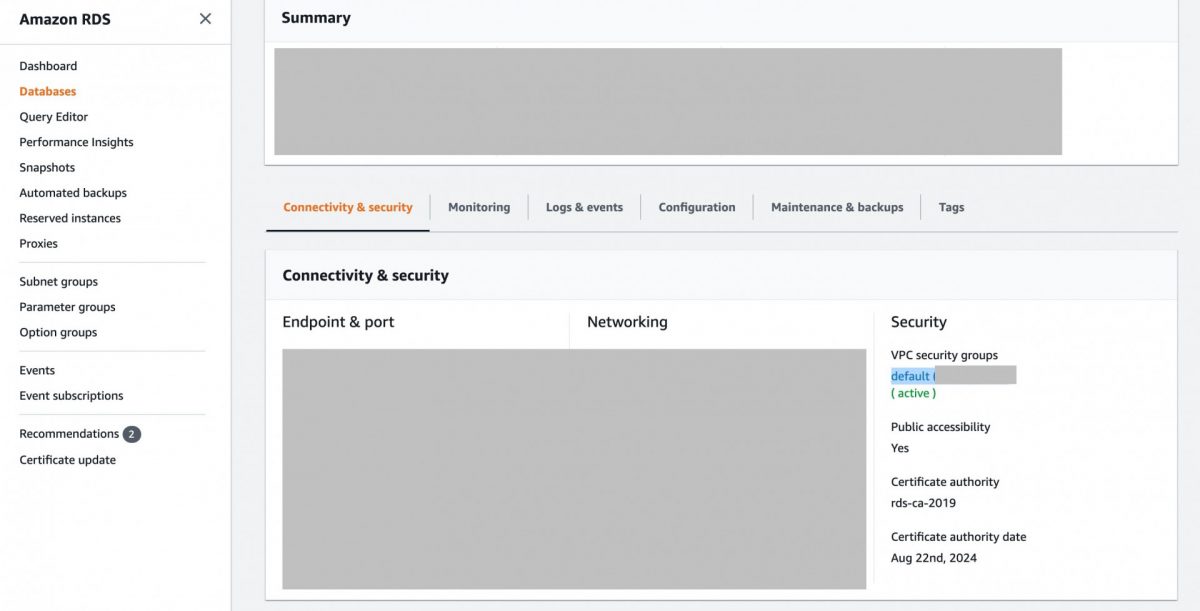

If the query is not assigned to a server during that time, the client is disconnected) so the query can’t spend more than a few seconds on Pgbouncer before being sent to the database or rejected with a query_wait_timeouterror.Ī more recent but much more severe retransmission spike/storm If it is not the database, maybe the query was blocked on Pgbouncer? That is also highly unlikely because we have a very short query_wait_timeout configured for our Pgbouncers (from Pgbouncer docs, query_wait_timeout is the maximum time queries are allowed to spend waiting for execution. Our general database access layout looks something like this Application → AWS Network Load Balancer → Pgbouncer → Database What’s more, we log slow queries using Postgres log_min_duration_statement setting, a query that takes 15 minutes was sure to pop up in that log but it didn’t. Now, this is impossible because we have a tight statement_timeout on Postgres on the scale of seconds (not minutes!).

What was particularly interesting here was that all 15-minute requests were blocked on what appeared to be a 15-minute database query.
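As a minimal sketch of the per-connection approach, the snippet below builds a libpq keyword/value connection string that sets tcp_user_timeout alongside the keepalive parameters. The keyword names are libpq connection parameters; the host name, database name, and every numeric value are hypothetical placeholders for illustration, not the values used in this setup.

```python
def build_conninfo(host, dbname, user_timeout_ms, idle_s, interval_s, count):
    """Render a libpq keyword/value connection string with per-connection TCP settings."""
    params = {
        "host": host,
        "port": 5432,
        "dbname": dbname,
        "keepalives": 1,                      # enable TCP keepalives
        "keepalives_idle": idle_s,            # seconds of idleness before the first probe
        "keepalives_interval": interval_s,    # seconds between probes
        "keepalives_count": count,            # unanswered probes before giving up
        "tcp_user_timeout": user_timeout_ms,  # milliseconds of unacknowledged data allowed
    }
    return " ".join(f"{key}={value}" for key, value in params.items())


def keepalive_window_s(idle_s, interval_s, count):
    """Approximate time for keepalives alone to declare an idle connection dead."""
    return idle_s + interval_s * count


# Hypothetical starting point: aggressive values, to be raised while tuning.
conninfo = build_conninfo(
    "pgbouncer.internal", "app",
    user_timeout_ms=10_000, idle_s=5, interval_s=2, count=2,
)
print(conninfo)
print(keepalive_window_s(5, 2, 2), "seconds for keepalives to give up")
```

The resulting string can be handed to a libpq-based driver, for example `psycopg2.connect(conninfo)`. Note that tcp_user_timeout is expressed in milliseconds while the keepalive parameters are in seconds, and that the setting only takes effect on platforms where TCP_USER_TIMEOUT is available.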

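The 15-minute figure discussed above can be checked with a little arithmetic. The sketch below sums the exponential backoff waits for the default of 15 retransmissions, assuming the retransmission timeout starts at the Linux minimum of 200 ms and is capped at 120 seconds. This is an idealized case: the real starting timeout is derived from the measured round-trip time, so actual totals vary.

```python
def total_retransmission_timeout(retries=15, rto_min=0.2, rto_max=120.0):
    """Seconds from the first transmission until the kernel gives up (idealized)."""
    total = 0.0
    rto = rto_min
    # One wait after the original send, plus one wait after each retransmission.
    for _ in range(retries + 1):
        total += rto
        rto = min(rto * 2, rto_max)  # exponential backoff, capped at rto_max
    return total


timeout_s = total_retransmission_timeout()
print(f"{timeout_s:.1f} s (~{timeout_s / 60:.1f} min)")
# prints: 924.6 s (~15.4 min)
```

Lowering the retry count in this model shortens the window quickly, which is why a system-wide change to tcp_retries2 would work but would also affect every other connection on the host.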